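Hello, this is Kusumoto from HDE.

Have you ever used Kubernetes? Roughly speaking, it is a platform for running containers as a cluster. If you have used it, where do you run it? On servers in a data center (iDC)? On AWS?

This article is not about Kubernetes itself but about AKS, the container service for running Kubernetes on Azure.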

What is AKS?

AKS stands for Azure Container Service, a managed container service.

azure.microsoft.com

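What a container is falls outside the scope of this article, so please look that up yourself, but preparing a container environment and actually operating it is quite hard. AKS is a service that hands that difficult management work over to Azure so that you can deploy and manage containerized applications quickly and easily.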

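History of AKS

Container? C? Shouldn't that be ACS? You would be right, but AKS is currently the correct abbreviation.

First, Microsoft began offering ACS as a managed container service in 2015. At this point it was ACS.

After that, in the container community (?), Kubernetes, the open source platform from Google for clustering and operating containers, started gaining popularity. Microsoft apparently decided it should ride this wave too, and in April 2017 it acquired Deis, a company that had been developing Kubernetes-related tools.

Then, in October 2017, Microsoft announced official Kubernetes support in ACS and changed the abbreviation to AKS. At that point the abbreviation became AKS, but the official name stayed Azure Container Service; it had not become Azure Kubernetes Service.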

By the way, what is Kubernetes?

I will skip the details, but Kubernetes is a platform from Google for clustering and operating containers.

kubernetes.io

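Clustering containers requires things like automated deployment and scaling, so operating everything by hand is a heavy burden. Kubernetes provides features to automate as much of that operation as possible.

Incidentally, when you first saw the word "Kubernetes", you probably couldn't tell how to pronounce it. The reading differs from person to person, such as "kuberuneitesu" (くべるねいてす), "kuubenetesu" (くーべねてす), and "kuubaaneitesu" (くーばーねいてす); I use the first one.

Also, because the word is long and there are eight letters between the "K" and the "s", it is abbreviated as k8s, the same way as i18n.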
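As a small aside, the abbreviation rule can be sketched in shell: keep the first and last letters and replace everything in between with a count. The function name numeronym is my own and is not part of any tool.

```shell
#!/bin/sh
# Build a numeronym: first letter + number of letters in between + last letter.
numeronym() {
    word=$1
    len=${#word}
    # Words of three characters or fewer have nothing worth abbreviating.
    if [ "$len" -le 3 ]; then
        printf '%s\n' "$word"
        return 0
    fi
    first=$(printf '%s' "$word" | cut -c1)
    last=$(printf '%s' "$word" | cut -c"$len")
    printf '%s%d%s\n' "$first" "$((len - 2))" "$last"
}

numeronym kubernetes            # prints k8s
numeronym internationalization  # prints i18n
```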

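Benefits of using AKS

First, there is no charge for using AKS itself or for the Kubernetes cluster. You pay only for the resources you actually use, such as VMs and global IPs.

Next, and above all, managing Kubernetes itself becomes easy.

Kubernetes is a very powerful tool, but actually operating it is by no means easy. AKS automates and simplifies much of that management, so you can use automatic upgrades, scaling, self-healing, and so on without complicated operations.

Another attraction is that the master nodes are managed by Azure and you don't have to manage them yourself, so you can focus on developing your application.

Trying it out

Now let's actually try it.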

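Setting up the environment

Let's start by setting up the environment.

I will assume you have a Linux machine at hand. Setup is broadly similar on Windows and Mac, but tool installation differs slightly, so please look up those details yourself.

For reference, I created a single Ubuntu VM on Azure for this test.

Installing the az command

First, install az, Azure's command-line tool.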

# pip install azure-cli

The az command is written in Python, so install it with pip.

If you don't have pip, please get it installed first.
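If you are not sure what is already on the machine, a quick check like the following may help. This is only a sketch; have_cmd is a helper name I made up, and it relies on nothing more than the shell's built-in command -v.

```shell
#!/bin/sh
# Report whether a command is available on PATH.
have_cmd() {
    command -v "$1" >/dev/null 2>&1
}

# Check the two tools this section depends on.
for tool in pip az; do
    if have_cmd "$tool"; then
        echo "$tool: found"
    else
        echo "$tool: not found"
    fi
done
```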

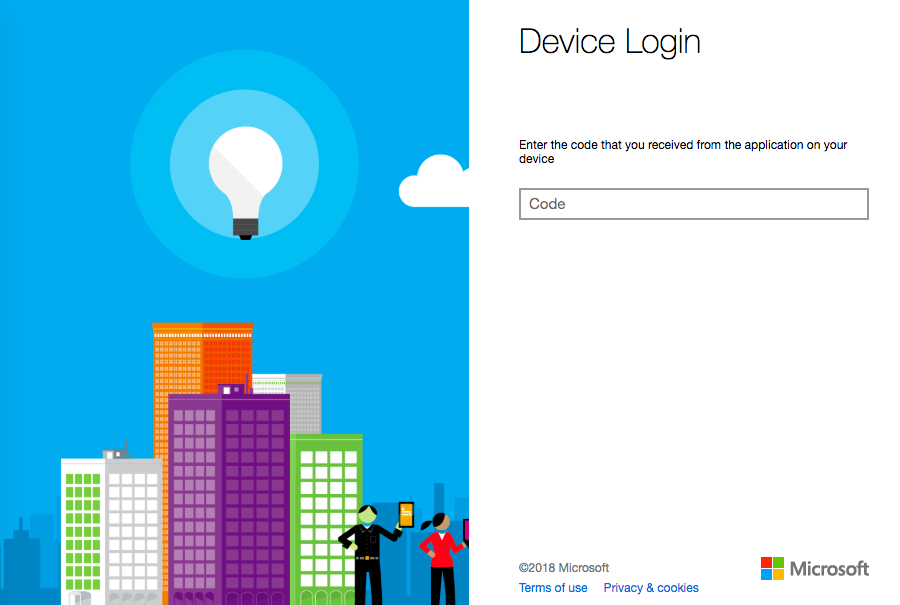

Logging in to Azure

Log in to Azure with the az command.

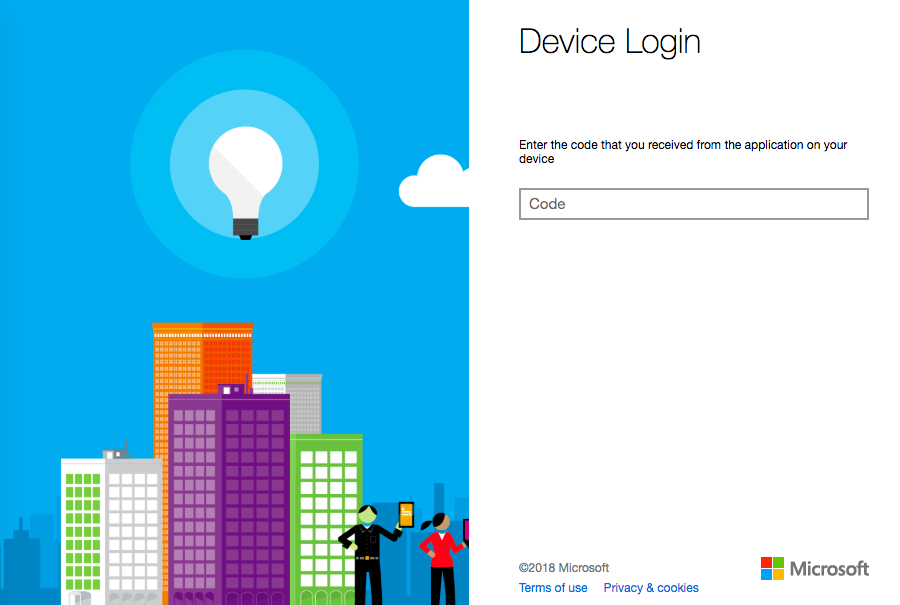

# az login

To sign in, use a web browser to open the page https://microsoft.com/devicelogin and enter the code AABBCC999 to authenticate.

[

{

"cloudName": "AzureCloud",

"id": "99999999-8888-7777-6666-555555555555",

"isDefault": true,

"name": "Microsoft Azure Enterprise",

"state": "Enabled",

:

Note: the IDs and codes shown here have been replaced with dummy values.

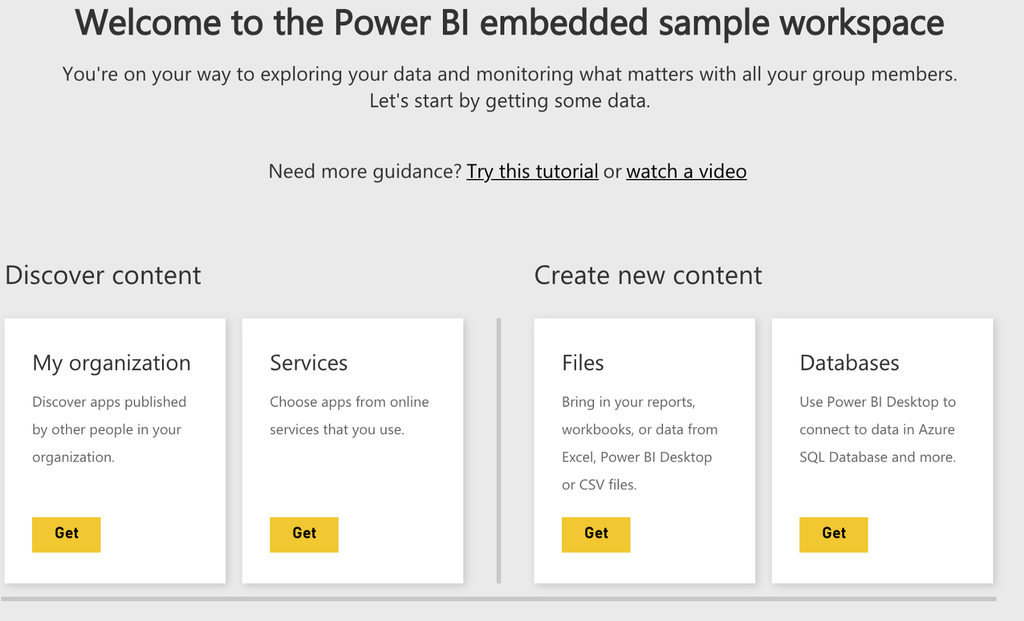

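In an environment with a GUI, a browser starts up. Even without one, you can open the displayed URL https://microsoft.com/devicelogin, which shows the page in the screenshot, and enter the authentication code displayed with it (for example AABBCC999) to complete the login.

Once you have done this, the machine is authenticated, so you don't need to log in again next time.

Configuring the Azure subscription and resource group

If your account has multiple subscriptions, specify the one you want to use with the following command. If you don't, the first subscription shown by az login above is used. At first I got stuck for a while because resources were created in a subscription I didn't intend.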

# az account set --subscription "Microsoft Azure Enterprise"

Here I specify the subscription named Microsoft Azure Enterprise.
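To avoid creating resources in the wrong subscription, it may be worth checking which one is active before going on. The sketch below assumes you are already logged in; check_subscription and current_subscription are helper names of my own, while az account show with --query and -o tsv is the standard way to print a single field.

```shell
#!/bin/sh
# Print the name of the subscription that az will use by default.
current_subscription() {
    az account show --query name -o tsv
}

# Succeed only when the active subscription matches the expected name.
check_subscription() {
    expected=$1
    actual=$(current_subscription) || return 1
    if [ "$actual" = "$expected" ]; then
        echo "OK: using subscription '$actual'"
    else
        echo "Mismatch: expected '$expected' but az is using '$actual'" >&2
        return 1
    fi
}

# Usage (requires a prior az login):
#   check_subscription "Microsoft Azure Enterprise"
```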

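If you don't have a resource group yet, create one with the following command. You will specify it when running later commands. If you already have one, you don't need to create it.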

# az group create -n kusumoto-dev -l eastus

Here I create the resource group kusumoto-dev in eastus (East US).

Installing kubectl

Install kubectl, the Kubernetes command-line tool.

# az aks install-cli --install-location ~/bin/kubectl

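Note that az is the command-line tool for Azure as a whole, not just for AKS, so for AKS-related operations you add the aks subcommand.

That completes the setup.

Now let's actually use AKS.

Creating a cluster

Create a cluster with the az aks create command. It takes a little while because it creates VMs on Azure, among other things. For me it took about 15 minutes.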

# az aks create -n k8stest -g kusumoto-dev -l eastus --kubernetes-version 1.7.7 --generate-ssh-keys

{

"additionalProperties": {},

"agentPoolProfiles": [

{

"additionalProperties": {},

"count": 3,

:

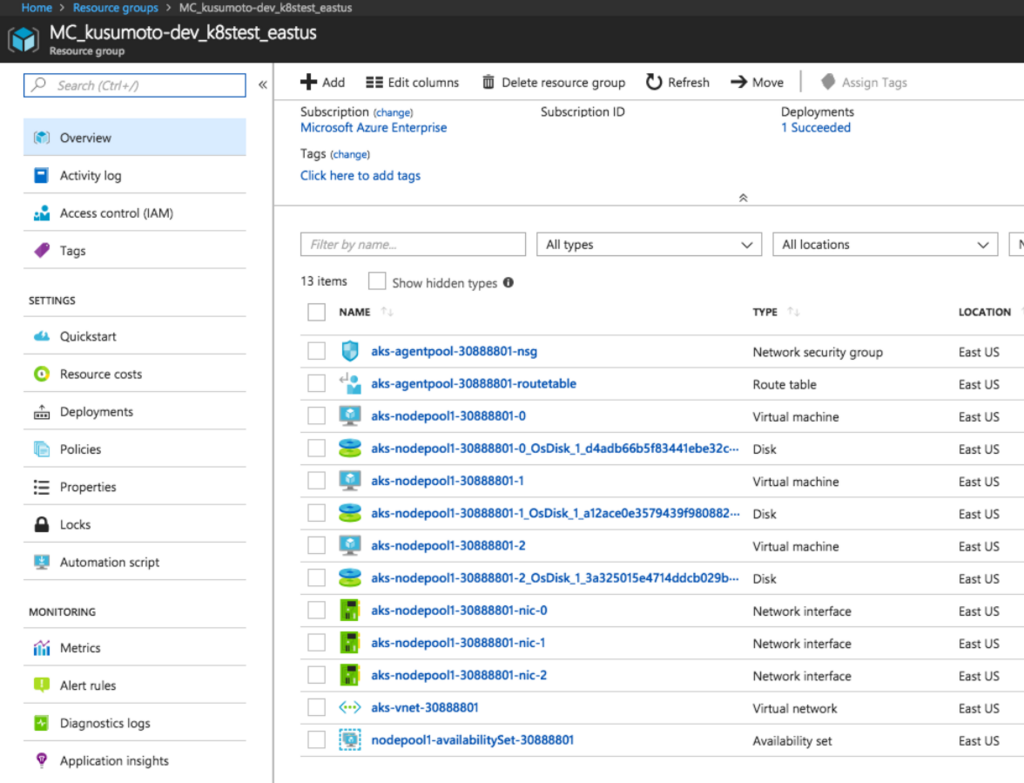

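This created a cluster named k8stest in the resource group kusumoto-dev in the eastus region.

That's all it takes to create the cluster. There is no need to create VMs, then nodes, then pods by hand.

Let's look at the cluster we created.

First, with the az command.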

# az aks list -o table

Name Location ResourceGroup KubernetesVersion ProvisioningState Fqdn

------- ---------- --------------- ------------------- ------------------- ---------------------------------------------------------

k8stest eastus kusumoto-dev 1.7.7 Succeeded k8stest-kusumoto-dev-aaaaa-00000000.hcp.eastus.azmk8s.io

The -o option selects the output format; here it is table.

You can confirm that the k8stest cluster has been created.
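If you want to check a single cluster from a script rather than read the table, something like the following might work. It is a sketch under the assumption that the ProvisioningState column above corresponds to the provisioningState field returned by az aks show; cluster_state and cluster_ready are names I chose for illustration.

```shell
#!/bin/sh
# Print the provisioning state of one cluster, e.g. "Succeeded".
cluster_state() {
    name=$1
    group=$2
    az aks show -n "$name" -g "$group" --query provisioningState -o tsv
}

# Return success only if the cluster has finished provisioning.
cluster_ready() {
    state=$(cluster_state "$1" "$2") || return 1
    [ "$state" = "Succeeded" ]
}

# Usage:
#   cluster_ready k8stest kusumoto-dev && echo "k8stest is ready"
```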

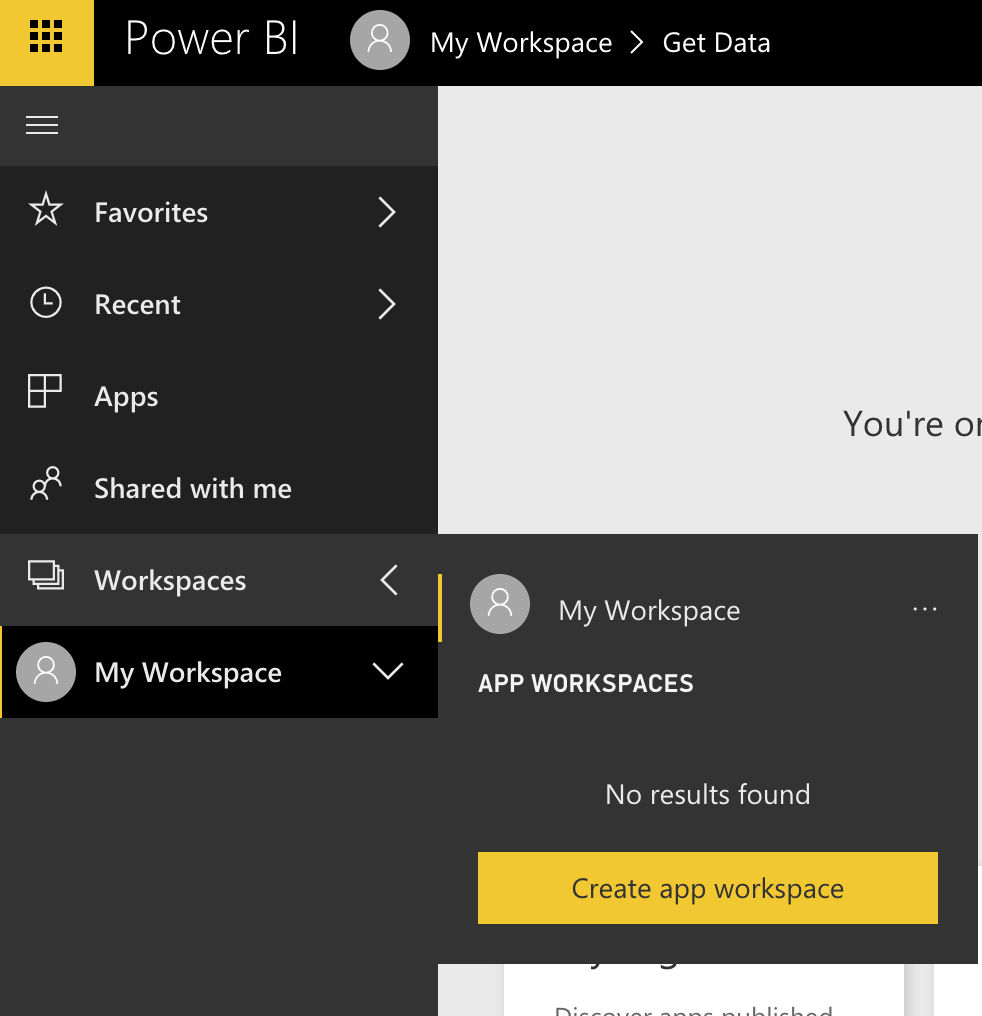

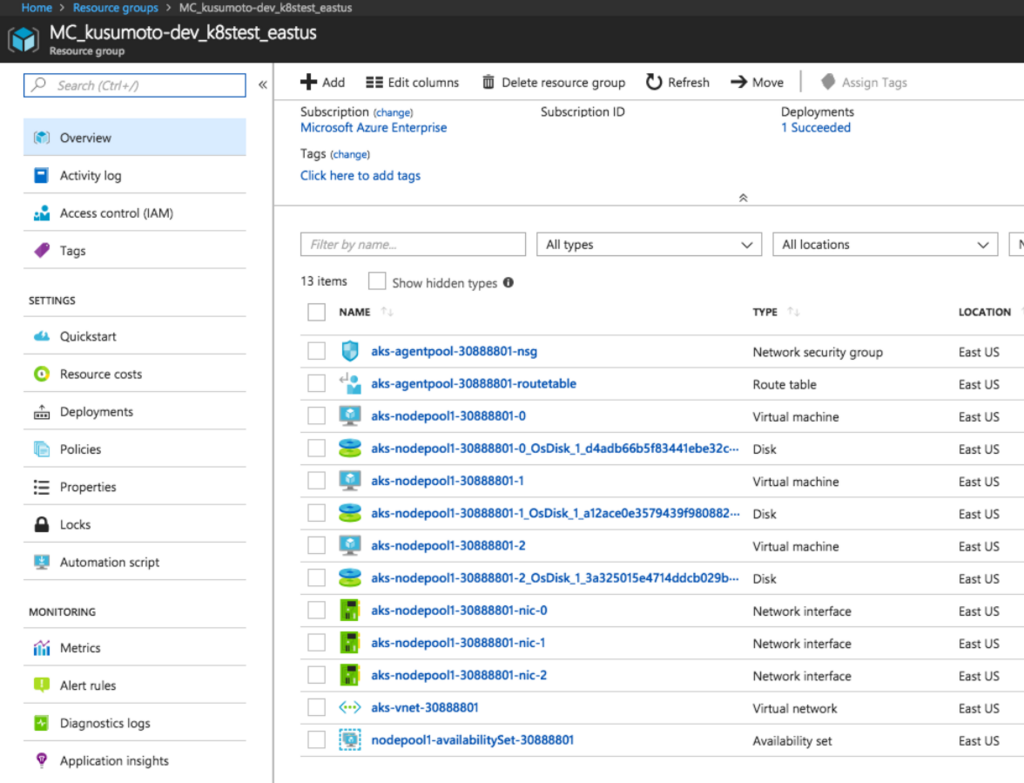

Next, let's look at it in the Azure portal.

Publishing a service

Next, let's also use the kubectl command and publish a service we create.

Getting credentials

First, you need to get the credentials for the cluster you created.

# az aks get-credentials -n k8stest -g kusumoto-dev

Merged "k8stest" as current context in /root/.kube/config

You only need to run this command once per cluster.
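If you work with several clusters, it can be reassuring to confirm that kubectl is pointing at the one you just merged. A minimal sketch follows; using_context is a hypothetical helper, and kubectl config current-context is the standard command it wraps.

```shell
#!/bin/sh
# Return success if kubectl's current context has the expected name.
using_context() {
    expected=$1
    current=$(kubectl config current-context 2>/dev/null) || return 1
    [ "$current" = "$expected" ]
}

# Usage:
#   using_context k8stest || echo "kubectl is not pointing at k8stest" >&2
```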

Creating a web container

Now let's actually create a web container and publish it.

To confirm access as well, let's look at the list of nodes.

# kubectl get nodes

NAME STATUS ROLES AGE VERSION

aks-nodepool1-30888801-0 Ready agent 42m v1.7.7

aks-nodepool1-30888801-1 Ready agent 42m v1.7.7

aks-nodepool1-30888801-2 Ready agent 42m v1.7.7

Sure enough, three nodes have been created.

To increase the number of nodes (scale out), use az aks scale. I will explain this later.

Pull the nginx image from Docker Hub and run it.

# kubectl run mynginx --image=nginx:1.13.5-alpine

deployment.apps "mynginx" created

Check that the container (Pod) has been created.

# kubectl get pods

NAME READY STATUS RESTARTS AGE

mynginx-1485169511-1pjds 1/1 Running 0 5s

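It has indeed.

I will skip the explanation of nodes and Pods (the unit Kubernetes uses to run containers), but this alone gives you an nginx environment.

All that's left is the configuration to expose it externally.

Expose it on port 80.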

# kubectl expose deploy mynginx --port=80 --type=LoadBalancer

service "mynginx" exposed

Let's check whether it has been exposed.

# kubectl get services

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.0.0.1 <none> 443/TCP 58m

mynginx LoadBalancer 10.0.141.176 11.22.33.44 80:31310/TCP 3m

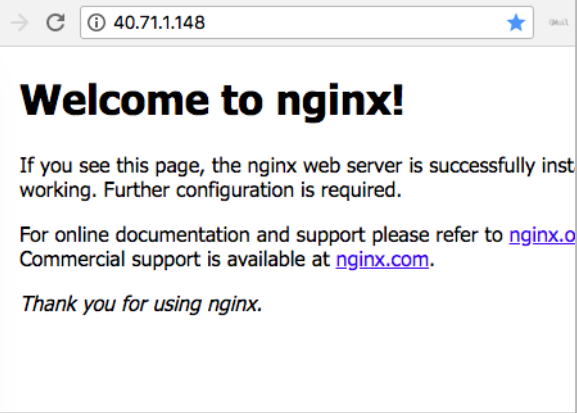

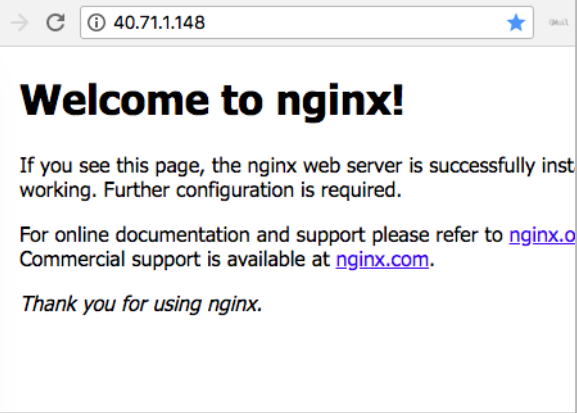

EXTERNAL-IP is the global IP. Right after kubectl expose, the IP may not be assigned yet, but it is assigned automatically if you wait a little. That's all it takes to publish, so opening http://11.22.33.44 in a browser shows the Welcome to nginx! page.
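Rather than rerunning kubectl get services by hand until the address shows up, the wait can be scripted. The following is a sketch, not something AKS provides: external_ip and wait_for_external_ip are names of my own, and the jsonpath expression assumes the service is of type LoadBalancer and reports its address under status.loadBalancer.ingress.

```shell
#!/bin/sh
# Print the external IP of a LoadBalancer service, or nothing if none is assigned yet.
external_ip() {
    kubectl get service "$1" -o jsonpath='{.status.loadBalancer.ingress[0].ip}'
}

# Poll until the service has an external IP, then print it.
# Arguments: service name, max attempts (default 30), seconds between attempts (default 10).
wait_for_external_ip() {
    service=$1
    attempts=${2:-30}
    interval=${3:-10}
    i=0
    while [ "$i" -lt "$attempts" ]; do
        ip=$(external_ip "$service" 2>/dev/null)
        if [ -n "$ip" ]; then
            printf '%s\n' "$ip"
            return 0
        fi
        i=$((i + 1))
        sleep "$interval"
    done
    echo "Timed out waiting for an external IP on $service" >&2
    return 1
}

# Usage:
#   ip=$(wait_for_external_ip mynginx) && echo "Open http://$ip in a browser"
```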

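For real operation there is still plenty to do, such as acquiring a domain, configuring HTTPS, and configuring security, but the basic environment is ready at this point, so it's easy.

Scaling out

We ran it for a while → traffic increased → the site got slow!

So let's scale out.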

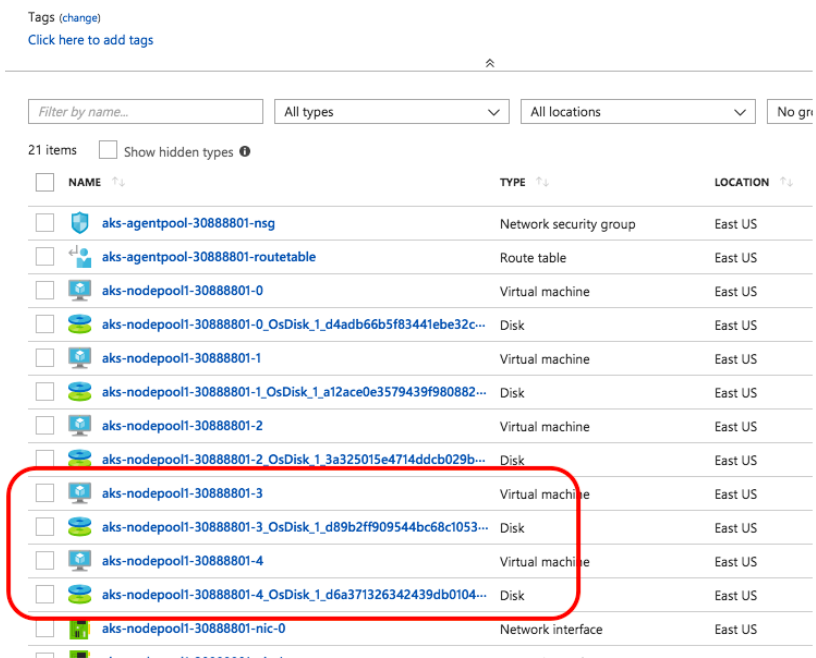

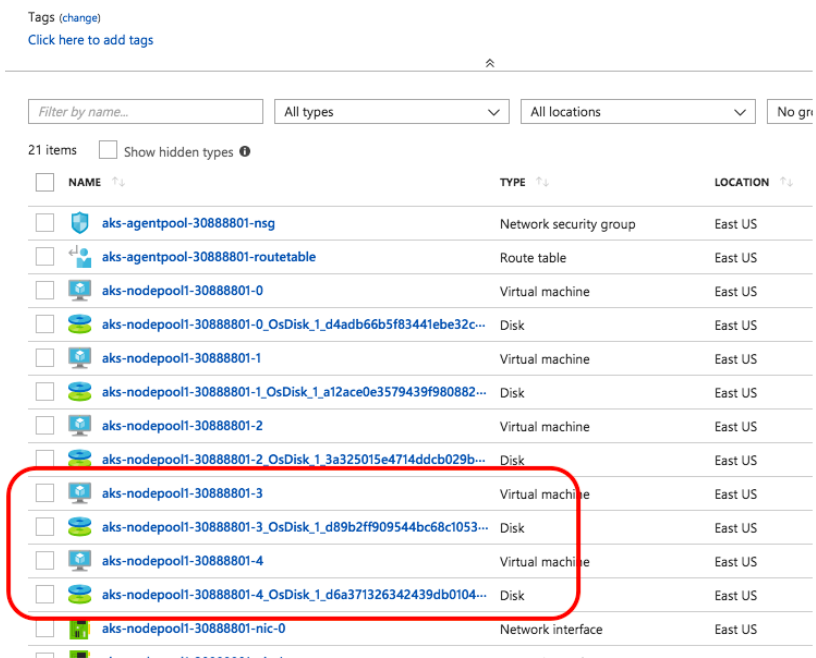

There are currently three nodes. Let's increase that to five.

There's only one command to run.

# az aks scale -n k8stest -g kusumoto-dev --node-count=5

That's all it takes to scale out.

Let's look at the state of the nodes.

# kubectl get nodes

NAME STATUS ROLES AGE VERSION

aks-nodepool1-30888801-0 Ready agent 1h v1.7.7

aks-nodepool1-30888801-1 Ready agent 1h v1.7.7

aks-nodepool1-30888801-2 Ready agent 1h v1.7.7

aks-nodepool1-30888801-3 Ready agent 1m v1.7.7

aks-nodepool1-30888801-4 NotReady agent 1s v1.7.7

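Sure enough, two were added, for a total of five.

The last one hasn't finished deploying yet, but it will complete automatically after a while.

You can also confirm the increase from the Azure portal.

Normally, adding servers means building a server, installing it, assigning an IP, adding it to the load balancer, and so on, but all of that happens automatically here, so you can scale out safely and simply.

Of course, you can also scale down by reducing the number with the --node-count option. In that case too, the IPs of the affected nodes are automatically removed from the load balancer, and the cluster scales down safely.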
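Since a new node can sit in NotReady for a short while after scaling, a script that depends on the extra capacity may want to count Ready nodes first. This is a rough sketch; ready_node_count and nodes_ready are helper names I made up, and the parsing assumes STATUS is the second column of kubectl get nodes, as in the output above.

```shell
#!/bin/sh
# Count nodes whose STATUS column is exactly "Ready".
# Nodes shown as NotReady or with SchedulingDisabled are deliberately not counted.
ready_node_count() {
    kubectl get nodes --no-headers | awk '$2 == "Ready" { n++ } END { print n + 0 }'
}

# Return success once at least the given number of nodes are Ready.
nodes_ready() {
    want=$1
    have=$(ready_node_count) || return 1
    [ "$have" -ge "$want" ]
}

# Usage:
#   nodes_ready 5 && echo "all five nodes are Ready"
```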

Deleting a cluster

To delete a cluster, use az aks delete.

# az aks delete -n k8stest -g kusumoto-dev

Are you sure you want to perform this operation? (y/n): y

:

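It's sad when a service comes to an end, but you keep getting charged as long as the resources exist, so delete clusters you no longer need with the command above.

Accessing the master node

With AKS you never use the master node directly, but if you still want to access it, run the following command.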

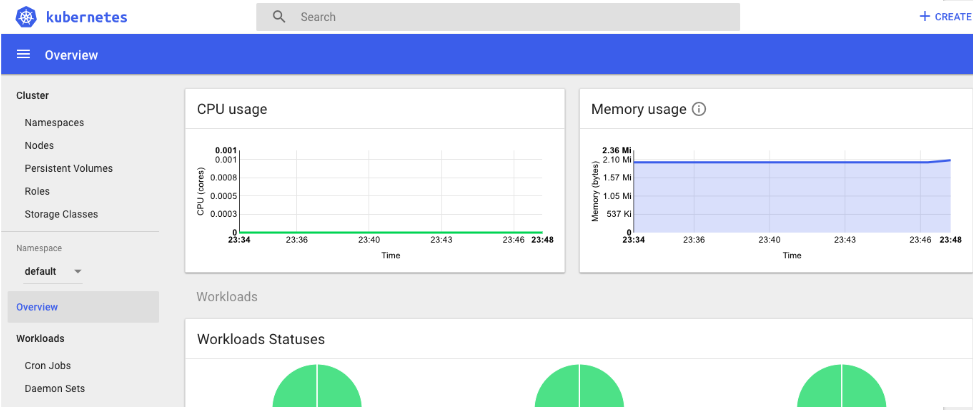

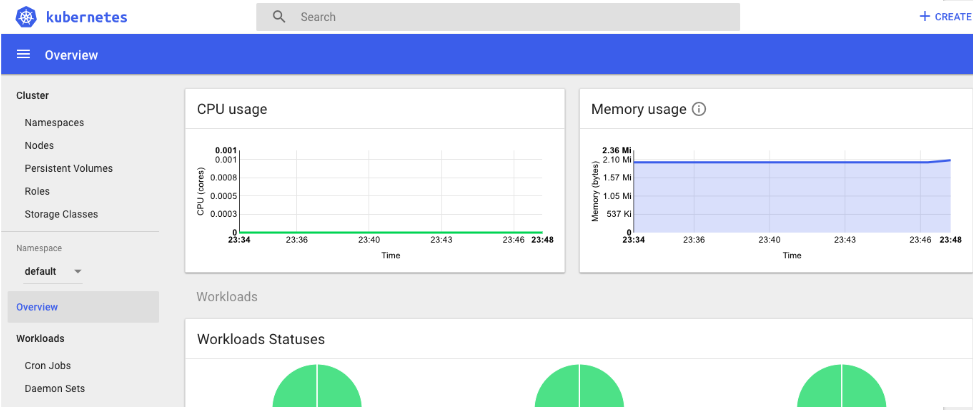

# az aks browse -n k8stest -g kusumoto-dev

Merged "k8stest" as current context in /tmp/tmpvtNG_R

Proxy running on http://127.0.0.1:8001/

Press CTRL+C to close the tunnel...

Forwarding from 127.0.0.1:8001 -> 9090

Forwarding from [::1]:8001 -> 9090

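A browser opens and shows the familiar (?) Kubernetes management screen.

That's fine if you run az on your local PC, but if you can't use a GUI, for instance because you're running it on a remote machine over SSH, add the --disable-browser option. No browser starts, but since this is a port forward, the management screen opens as long as you can reach port 8001 on that machine.

The port number seems to be set to 8001 automatically, and I couldn't find a way to change it. The official manual doesn't mention one either. az aks browse appears to run kubectl internally, so if you really want to change the port number, you'll apparently have to work it out with kubectl.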
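For what it's worth, here is one way the kubectl route might look. Treat it as an untested sketch built on assumptions: that the dashboard pod runs in the kube-system namespace with the label k8s-app=kubernetes-dashboard, and that it listens on port 9090 as the forwarding output above suggests. dashboard_pod and forward_dashboard are names of my own.

```shell
#!/bin/sh
# Find the dashboard pod. The namespace and label are assumptions, not confirmed for AKS.
dashboard_pod() {
    kubectl -n kube-system get pods -l k8s-app=kubernetes-dashboard \
        -o jsonpath='{.items[0].metadata.name}'
}

# Forward a local port of your choice (default 8080) to the dashboard's port 9090.
forward_dashboard() {
    local_port=${1:-8080}
    pod=$(dashboard_pod) || return 1
    if [ -z "$pod" ]; then
        echo "dashboard pod not found" >&2
        return 1
    fi
    kubectl -n kube-system port-forward "$pod" "$local_port:9090"
}

# Usage:
#   forward_dashboard 9000   # then open http://127.0.0.1:9000/
```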

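Updating Kubernetes

A vulnerability is found in your middleware and you have to update, but there are so many side effects that you can't update easily... To keep you out of that situation, Kubernetes version upgrades can also be done easily and without affecting the service.

Here's an example of updating to version 1.8.1.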

# az aks upgrade -n k8stest -g kusumoto-dev --kubernetes-version 1.8.1

You can see the nodes being rolling-updated one at a time.

# kubectl get nodes

:

aks-nodepool1-30888801-4 Ready agent 13m v1.7.7

aks-nodepool1-30888801-5 Ready agent 35s v1.8.1 ←Create new node

# kubectl get nodes

:

aks-nodepool1-30888801-4 NotReady,SchedulingDisabled agent 14m v1.7.7 ←Stopping old node

aks-nodepool1-30888801-5 Ready agent 1m v1.8.1

# kubectl get nodes

:

aks-nodepool1-30888801-5 Ready agent 3m v1.8.1 ←Removed the old node. Upgrade DONE!
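To follow the rolling update without reading every line, you could summarise how many nodes are on each version. This is just a sketch; nodes_by_version is a hypothetical helper, and it assumes VERSION is the last column of kubectl get nodes, as in the output above.

```shell
#!/bin/sh
# Summarise how many nodes run each version (last column of `kubectl get nodes`).
nodes_by_version() {
    kubectl get nodes --no-headers \
        | awk '{ count[$NF]++ } END { for (v in count) print v, count[v] }' \
        | sort
}

# Usage: run it repeatedly during an upgrade and watch the old version's count drop.
#   nodes_by_version
```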

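Note that as of this writing (June 2018), Kubernetes seems to be out up to ver. 1.10, while AKS seems to support up to ver. 1.9.

In closing

I think AKS has drastically lowered the barrier to using Kubernetes. Many of the points that have made operations painful can be expected to go away. However, the service is reportedly still available only in US regions and can't currently be used in the Japan regions. According to someone at Microsoft, it isn't even on the roadmap yet, but I hope it becomes available in Japan soon.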